Data Research

Visualize, search, and analyze every mission across your fleet at petabyte scale. Debug failures in minutes, find edge cases across massive multi-modal datasets, and export any scenario directly as training data.

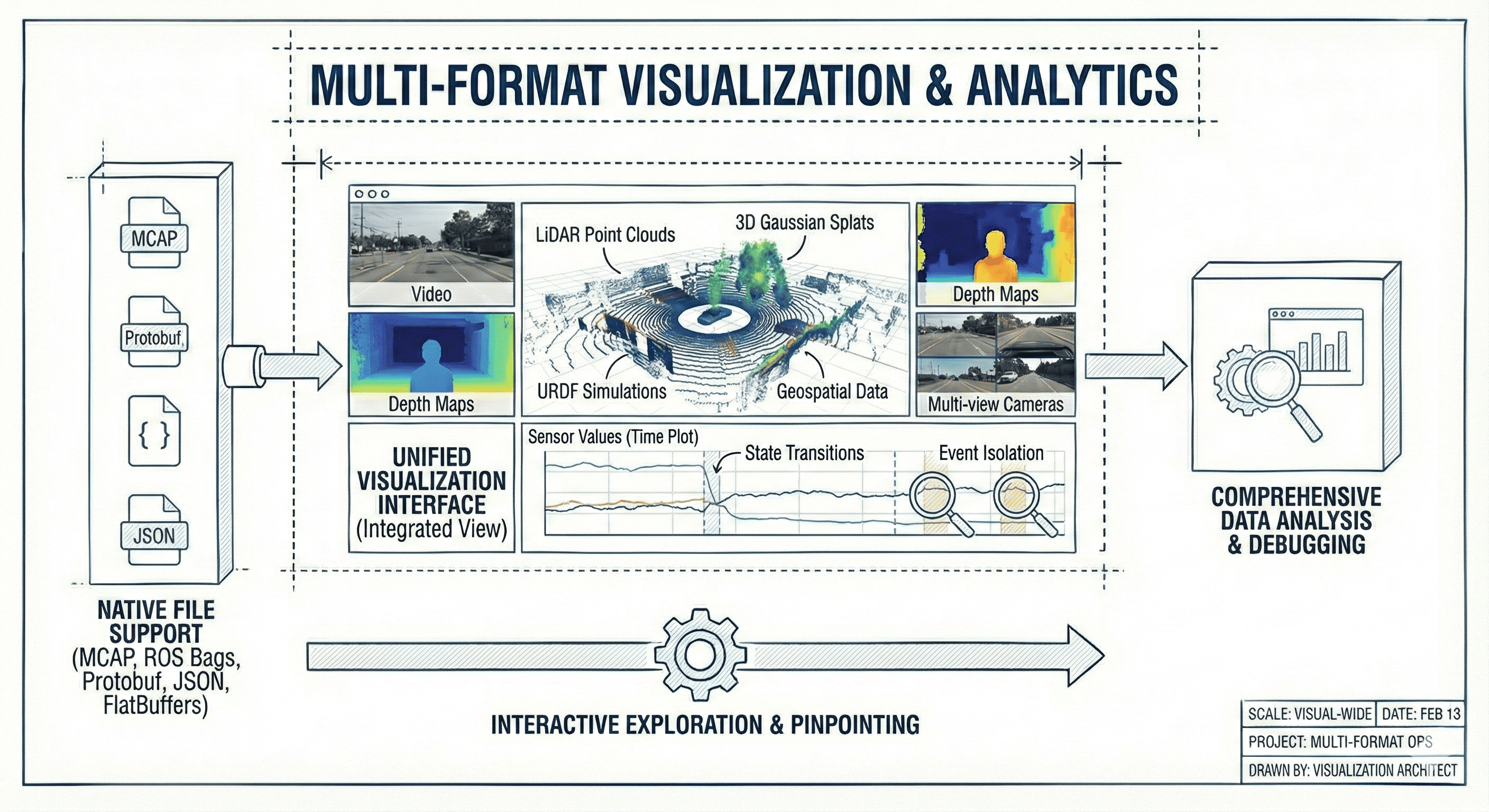

Multi-format visualization

Render LiDAR point clouds, video, 3D Gaussian splats, geospatial data, sensor values, URDF simulations, depth maps, and multi-view cameras in a single interface. Plot message values over time, pinpoint state transitions, and isolate events of interest. Native support for MCAP, ROS bags, Protobuf, JSON, and FlatBuffers.
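To make "pinpoint state transitions" concrete, here is a minimal sketch of the idea outside the platform. It assumes messages have already been decoded into `(timestamp, state)` pairs; the `find_transitions` helper and the state labels are hypothetical and are not part of any product API.

```python
from typing import Iterable, List, Tuple

# Assumed shape of a decoded message: (timestamp in seconds, state label).
Sample = Tuple[float, str]


def find_transitions(samples: Iterable[Sample]) -> List[Tuple[float, str, str]]:
    """Return (timestamp, old_state, new_state) for every change of state."""
    transitions = []
    previous = None
    for timestamp, state in samples:
        # Record a transition only when the label differs from the last one seen.
        if previous is not None and state != previous:
            transitions.append((timestamp, previous, state))
        previous = state
    return transitions


# Hypothetical flight-mode samples for illustration only.
samples = [
    (0.0, "IDLE"),
    (0.5, "IDLE"),
    (1.0, "TAKEOFF"),
    (1.5, "TAKEOFF"),
    (2.0, "HOVER"),
]
transitions = find_transitions(samples)
```

Each returned tuple marks the first timestamp at which the new state was observed, which is the point you would jump to in a timeline view.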

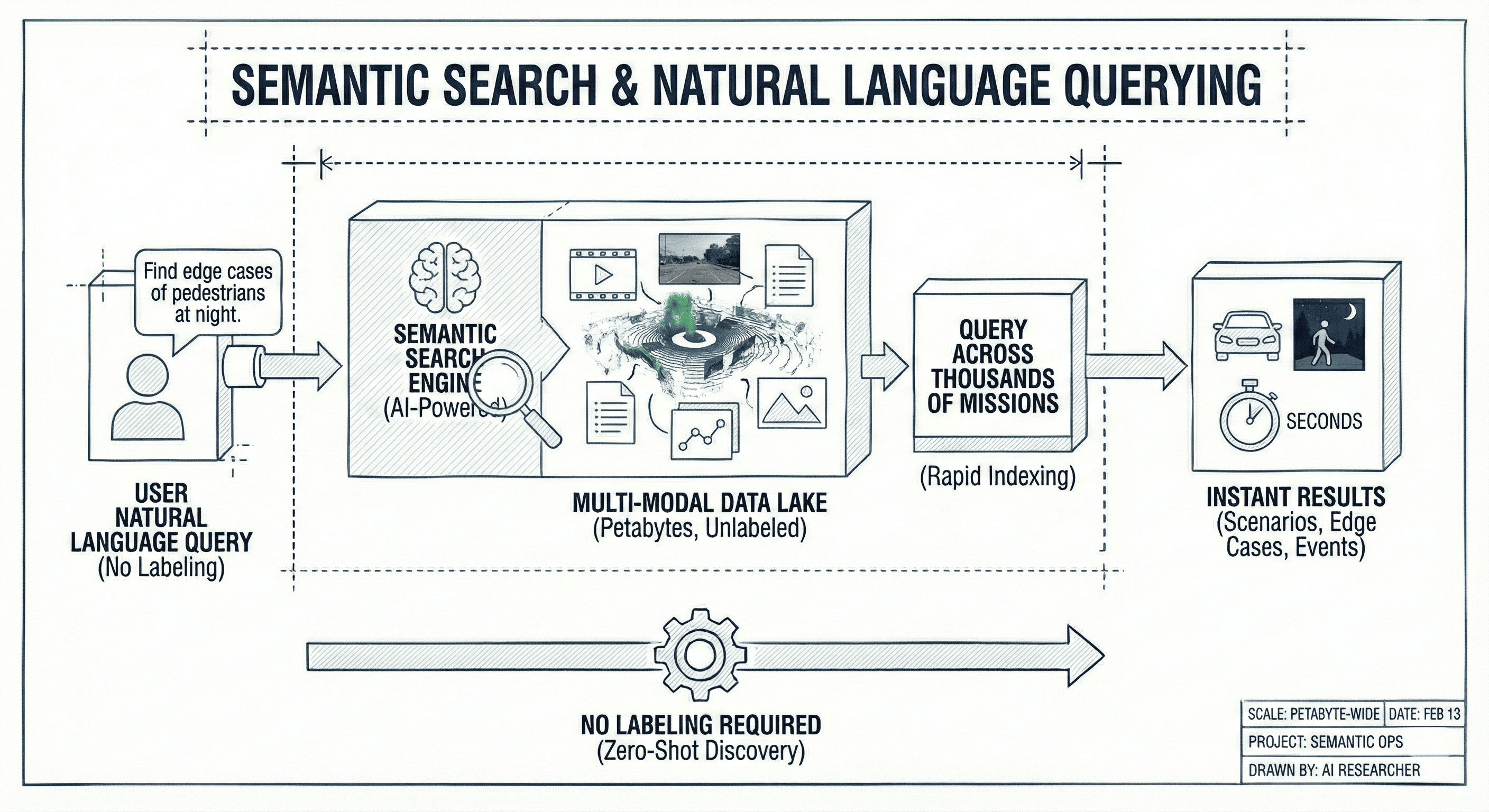

Semantic search

Query across thousands of missions in natural language with no labeling required. Search petabytes of multi-modal data to find specific scenarios, edge cases, and events of interest in seconds.

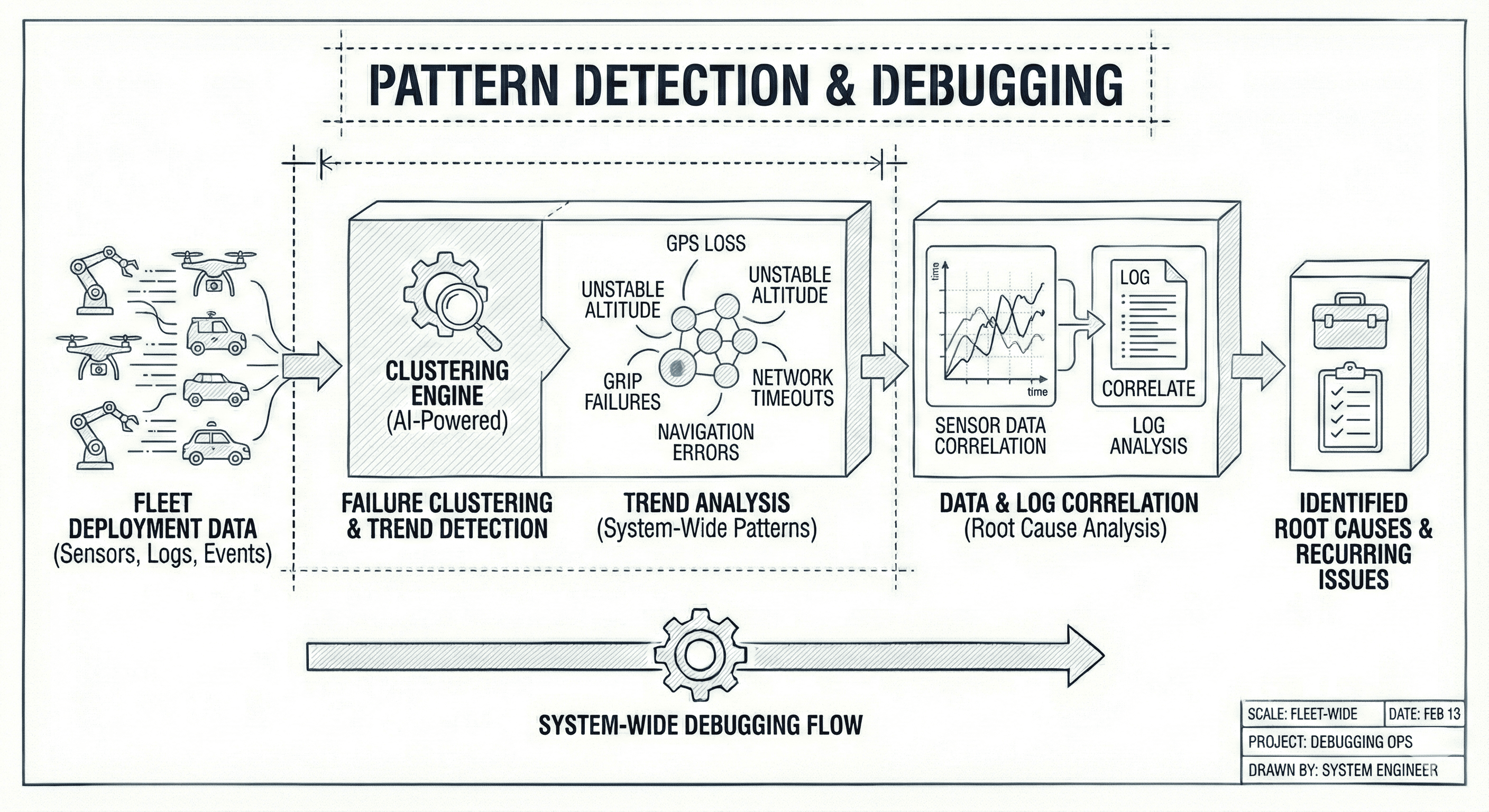

Pattern detection & debugging

Cluster similar failures and detect fleet-wide trends such as GPS loss, unstable altitude, grip failures, network timeouts, and navigation errors. Correlate sensor data with logs to identify root causes and recurring issues.
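One simple way to picture correlating sensor data with logs is a time-window join: for each sensor anomaly, collect the log lines recorded close to it. The sketch below is an illustration under stated assumptions, not the platform's method. The `logs_near_anomalies` helper, the one-second window, and the sample messages are all hypothetical.

```python
from bisect import bisect_left, bisect_right
from typing import Dict, List, Sequence, Tuple


def logs_near_anomalies(
    anomaly_times: Sequence[float],
    logs: Sequence[Tuple[float, str]],
    window_s: float = 1.0,
) -> Dict[float, List[str]]:
    """Map each anomaly timestamp to log messages within +/- window_s seconds.

    Assumes `logs` is a list of (timestamp, message) sorted by timestamp.
    """
    log_times = [t for t, _ in logs]
    matches: Dict[float, List[str]] = {}
    for t in anomaly_times:
        # Binary search for the slice of logs inside the time window.
        lo = bisect_left(log_times, t - window_s)
        hi = bisect_right(log_times, t + window_s)
        matches[t] = [message for _, message in logs[lo:hi]]
    return matches


# Hypothetical data for illustration only.
logs = [
    (9.2, "GPS fix lost"),
    (10.1, "altitude hold disengaged"),
    (25.0, "battery low"),
]
matches = logs_near_anomalies([10.0, 40.0], logs, window_s=1.0)
```

In this example the altitude anomaly at 10.0 s lines up with both the GPS and altitude-hold messages, while the anomaly at 40.0 s has no nearby log lines.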

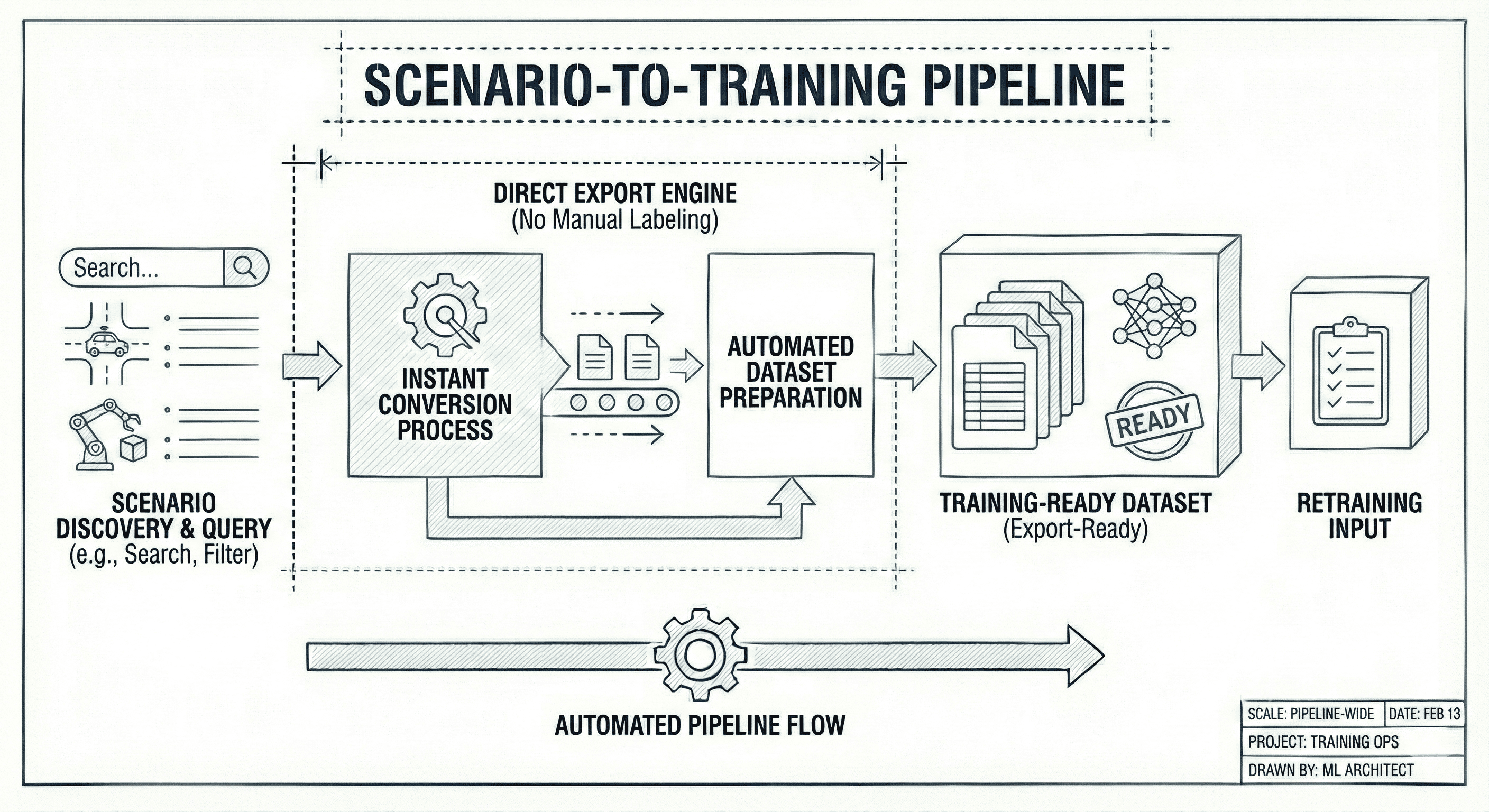

Scenario-to-training pipeline

Export any scenario directly as a training dataset with no additional labeling. Every query you run produces export-ready data, so there is no manual step between discovery and retraining.

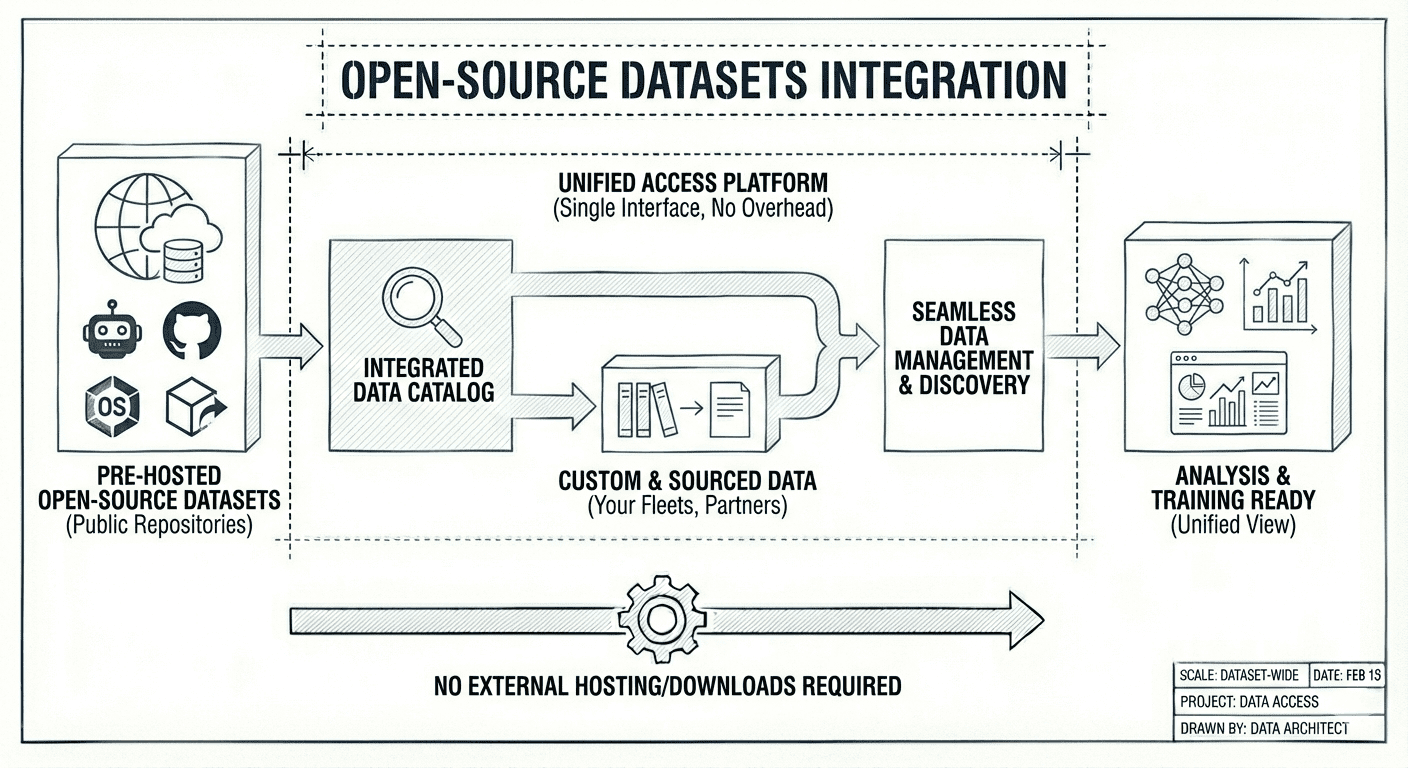

Open-source datasets

Access pre-hosted open-source robotics datasets alongside your custom and sourced data, with no need to find, download, or host external datasets separately.